The Storm in the Machine: A.I. and the Manufacture of Necessity

Pragmatic Idealism, the A.I. Arms Race, and the Manufacture of Necessity

Introduction: Pragmatic Idealism and A.I.

Cole Whetstone’s two-part essay, “Pragmatic Idealism and the Logic of Lesser Evils” (New York Journal of Philosophy), offers a theory of moral action under constraint. He calls that theory pragmatic idealism. Its ambition is to hold principle and circumstance in the same frame. Kantian idealism preserves the purity of universal obligation but can falter when the world has already narrowed the field of action. Realist traditions, from Machiavelli to Hobbes, understand force, fear, and power, but can drift into the worship of necessity. Whetstone’s project is to preserve what each tradition sees clearly while resisting what each is tempted to excuse.

His key distinction is between what is possible in principle and what is actually feasible under present conditions. He calls these Can₁ and Can₂. Can₁ refers to the ideal moral outcome considered abstractly, while Can₂ refers to the outcome still available once reality has imposed its limits.

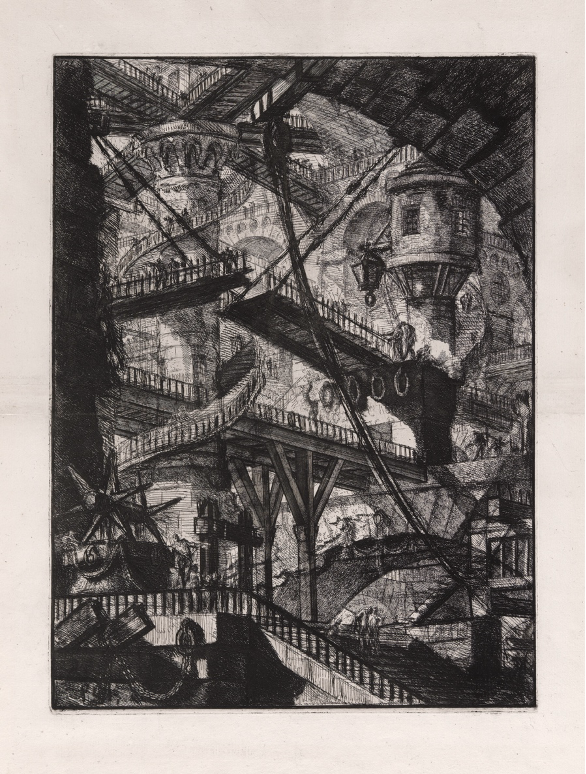

Whetstone introduces the distinction through the parable of a ship captain. During a storm, a young merchant objects when the captain throws his cargo overboard, accusing him of theft. The captain replies that the cargo is already lost. The real choice is no longer between saving and losing the cargo; the storm has erased that possibility. The choice now is between losing the cargo and saving the ship or losing both. In such circumstances, the captain does not become a thief; he chooses the lesser evil because the ideal option has disappeared.

This is the moral intelligence Whetstone wants to preserve. Constraint does not license everything. Nor can morality demand choices reality has made unavailable. The pragmatic idealist neither denies the storm nor bows before it. He sees the storm and chooses the best remaining good.

But artificial intelligence presents a harder case. Whetstone’s framework is most powerful when the storm is real and external: weather, a villain, a runaway trolley. A.I. presents a stranger moral situation; its central drama is not that we are trapped by a storm, but that the people invoking the storm are also helping to make it.

I. The Must₁/Must₂ Distinction

Whetstone extends the logic of constrained choice to the difference between the trolley case and the surgeon case.¹ In the trolley case, someone will die regardless of what the bystander does. The villain has already created the constrained situation. Pulling the lever merely redirects an existing threat. In the surgeon case, killing one healthy patient to save five sick ones creates a new victim inside the plan. The healthy patient was not already caught in the structure of harm. Harvesting his organs does not manage tragedy; it manufactures it.

Whetstone formalizes this as the distinction between Must₁ and Must₂. Must₁ harm is built into the agent’s own plan, as in the surgeon case (the plan works by killing the healthy patient). Must₂ harm arises from a tragic structure already imposed, as in the trolley case. The principle is roughly this: Harm is impermissible when one’s plan requires it, but may be permissible when it results from an external constraint and the chosen action minimizes the damage.

This distinction is powerful because it preserves a morally important difference between causing harm and responding to harm. The bystander at the trolley lever is not inventing the danger. The surgeon is. The captain in the storm is not destroying the cargo for gain or convenience. He is acting under a constraint he did not create.

The difficulty begins when this principle leaves the clean architecture of moral thought experiments and enters a world in which constraints are made by the very agents who later invoke them. Artificial intelligence is such a case.

II. A.I. as a Manufactured Storm

A.I. is often described as a storm already upon us. The technology is here. The race has begun. The incentives are fixed. The only choice, we are told, is whether to steer or be overtaken. If one company slows down, another releases first. If one lab refuses to build the model, another will. If democratic societies hesitate, authoritarian states will seize the advantage. If open systems proliferate, closed systems must race. If labor is automated, if children form attachments to chatbots, if political reality dissolves under synthetic media, if autonomous agents behave unpredictably, if biosecurity risks rise — these may be regrettable, even tragic, consequences. But they are presented as weather.

A.I. complicates Whetstone’s framework because the storm, in this case, is being manufactured.

The central question is whether A.I. risk belongs to Must₂ or Must₁. Do the harms arise from an independently imposed constraint, as in the trolley case? Or are they built into the plan itself, as in the surgeon case?

At first glance, many A.I. harms seem like Must₂. No single developer intends mass unemployment, child dependency, political destabilization, epistemic collapse, or bioweapon enablement. The stated plan is beneficent: tools for productivity, science, education, creativity, medicine, and abundance. The harms, in this telling, arise from the wider situation: market competition, geopolitical rivalry, misuse by bad actors, and the difficulty of controlling general-purpose technology. But that answer is too easy.

III. Incentives Shape Outcomes

In A.I., the line between Must₁ and Must₂ blurs because incentives, though they are not intentions, can shape outcomes just as decisively. They decide which projects receive funding, which risks are tolerated, which timelines come to seem necessary, which safety checks are skipped, which harms are externalized, and which human vulnerabilities become profitable.

A company may never adopt the maxim “addict the user.” Yet if revenue depends on engagement, addiction is no longer merely accidental. It is structurally invited. A platform may never plan to polarize a country. Yet if outrage increases attention and attention increases revenue, polarization becomes part of the machine’s expected output.

This was the lesson of social media. Its Can₁ promise was connection: democratized speech, access to information, global community. Its Can₂ reality was advertising-driven engagement maximization. The platforms did not need to intend loneliness, polarization, sexualization, shortened attention spans, or the breakdown of shared reality. The system only had to optimize what it was rewarded for optimizing.

A.I. arrives in the same language of uplift: medicine, education, discovery, productivity, abundance. Its governing incentives are harsher: capability, market capture, national advantage, automation, dependency, data extraction, and strategic dominance. So the moral analysis cannot stop at what builders consciously intend. It must ask what the game rewards.

IV. The Race That Makes the Storm

A.I. development is a multi-player prisoner’s dilemma with extraordinary stakes. Each major actor may prefer, in the abstract, a safer world: slower deployment, better evaluations, restrictions on dangerous capabilities, and international coordination. Each actor also fears being overtaken. Companies fear losing talent, capital, users, market share, and prestige. States fear losing military, economic, and intelligence advantage. The result is mutual acceleration.

This is the structure of coordination failure. Restraint may be collectively rational. Defection becomes individually rational under mistrust. Everyone may know racing is dangerous; everyone may also believe that stopping means losing. Each actor experiences constraint while all actors together produce it.
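The structure can be made concrete with a toy two-actor version of the race. What follows is a minimal sketch, not a model drawn from Whetstone or from the A.I. literature: the payoff numbers and the names `PAYOFF`, `best_response`, and `nash_equilibria` are illustrative assumptions, chosen only so that the ordering matches a standard prisoner’s dilemma.

```python
from itertools import product

MOVES = ("restrain", "race")

# Hypothetical payoffs (higher is better); the numbers are assumptions.
# Key: (my_move, rival_move) -> my payoff
PAYOFF = {
    ("restrain", "restrain"): 3,  # coordinated, safer development
    ("restrain", "race"): 0,      # overtaken by the rival
    ("race", "restrain"): 4,      # short-term lead over the rival
    ("race", "race"): 1,          # mutual acceleration
}

def best_response(rival_move):
    """Return the move that maximizes my payoff against a fixed rival move."""
    return max(MOVES, key=lambda move: PAYOFF[(move, rival_move)])

def nash_equilibria():
    """Return every pair of moves in which each is a best response to the other."""
    return [
        (a, b)
        for a, b in product(MOVES, repeat=2)
        if a == best_response(b) and b == best_response(a)
    ]

if __name__ == "__main__":
    print("Best response to restraint:", best_response("restrain"))
    print("Best response to racing:", best_response("race"))
    print("Equilibria:", nash_equilibria())
```

Under these assumed numbers, racing is the best response to either move, so mutual racing is the only equilibrium, even though mutual restraint would leave both actors better off (3 each rather than 1). Each actor experiences the other’s racing as a constraint; together they produce it.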

Here Whetstone’s ship-captain parable begins to fail. In the original parable, the storm is external. The captain did not create it. But in A.I., the storm is partly endogenous. The race is produced by venture capital, corporate strategy, national-security doctrine, ideological accelerationism, weak liability law, and the absence of enforceable international agreements.

When an A.I. company says, “We must deploy quickly because others are racing,” it may be describing a real Can₂ constraint. But it is also helping make that constraint real. In this sense, the company becomes both captain and storm.

V. Distributed Must₁

To speak properly about the A.I. situation, we need a new term. Call it distributed Must₁: harm that no single actor names as the object of its plan, but which becomes structurally necessary within the incentive system all actors inhabit and reproduce.

Modern technological harm rarely looks like the surgeon case. No one enters the operating room and announces that an innocent person must die. Harm emerges through optimization. The plan is to “maximize engagement,” “increase capability,” “capture market share,” “reduce labor costs,” “accelerate deployment,” or “win the race.” The violation is hidden inside the objective function.

A.I. intensifies this problem because its capabilities are general. The same systems that accelerate drug discovery may accelerate biological misuse. The same systems that tutor children may manipulate them. The same systems that write code may automate cyberattacks. The same systems that personalize assistance may create emotional dependency.

This is why the benefits and dangers cannot be neatly separated. A.I. offers extraordinary upside and extraordinary downside through the same underlying capacities. A cancer drug does not protect society from an engineered pathogen. GDP growth does not compensate for epistemic collapse. Administrative efficiency means little if human beings lose the capacity to govern the systems on which they depend.

The pragmatic idealist must therefore ask two questions, not one: What is the available good? And what is the manufactured necessity?

VI. The Arms-Race Argument

One answer treats the A.I. race like the trolley problem. The trolley is already moving. Someone will build these systems. The only realistic question is whether responsible actors build them first. In this framing, restraint becomes toxic idealism, a refusal to admit that the ideal option has vanished. Better that democratic societies build powerful A.I. than authoritarian states or reckless actors.

There is force in this argument. Unilateral disarmament by the most responsible actors may be unstable in exactly the sense Whetstone identifies. In game-theoretic terms, unconditional cooperation invites defection. If one actor slows while others race, the cooperative actor may lose influence over the technology’s trajectory.

Still, the frame is incomplete. The question is not whether “we” win the A.I. race. The question is what winning means if the prize is a system no one can control. If the United States beats China to a highly autonomous system it cannot align, govern, or contain, it has merely arrived first at the loss-of-control problem.

This is the deepest weakness in arms-race logic. It assumes the main danger is being beaten by the rival. With A.I., the greater danger may be the interaction between the technology and the incentive systems into which it is released. A nation can gain external power while suffering internal decomposition, like a body that grows enormous muscles as its organs fail. A.I. may increase GDP, military capacity, cyber power, and scientific speed while also producing unemployment, dependency, surveillance, and institutional distrust. This is mutually assured political destabilization.

VII. The Failure of the Nuclear Analogy

The nuclear analogy clarifies the issue, then fails. Nuclear weapons created a relatively stark negative-sum endpoint: full exchange meant catastrophe. That clarity helped stabilize deterrence. A.I. is more seductive. It is useful, profitable, intimate, and ideological. Nuclear weapons do not promise to tutor your child, discover antibiotics, write your emails, raise earnings, or cure cancer. A.I. does. Its catastrophic risks are braided into daily utility.

That makes the game harder to stabilize. With nuclear weapons, the taboo attaches to use. With A.I., danger may attach to ordinary deployment. The boundary between civilian and military, tool and agent, assistance and dependence, research and proliferation, persuasion and manipulation is unstable from the beginning.

So the Must₁/Must₂ distinction becomes difficult in practice. If a chatbot becomes emotionally indispensable to lonely teenagers, is that an unintended side effect, or the predictable result of anthropomorphic design under retention incentives? If a coding model lowers the barrier to cybercrime, is that misuse by bad actors, or the expected consequence of releasing general code-generation capacity? If an A.I. lab trains agentic systems in competitive environments where deception is instrumentally rewarded, is deception incidental, or structurally invited by the training regime?

In each case, the builder can say, “That was not the plan.” And the critic can answer, “It was in the incentives.”

VIII. Practical Contradiction

Game theory helps explain why this matters. A strategy cannot be judged only by its local intention. It must be judged by its equilibrium behavior.² A maxim that looks prudent in isolation may become destructive when generalized. Whetstone makes this point against pacifism: Universal pacifism may be beautiful in Can₁, yet unstable in Can₂ because it cannot resist defectors.³ The same test applies to A.I. acceleration. “Build as fast as possible so responsible actors win” may seem prudent locally. Universalized across companies and states, it produces the race that makes safety impossible.⁴

That is a practical contradiction. The strategy defeats its stated end. It pursues safety through speed, while speed erodes safety. It promises democratic advantage, while its internal effects may weaken democratic society. It seeks to prevent authoritarian misuse, while producing tools of surveillance, manipulation, and control attractive to every regime.

Here Kant remains useful, though not in the rigid form Whetstone criticizes.⁵ Kant asks whether a maxim can be universalized. Whetstone adds that the maxim must also be Can₂ viable. A.I. confirms the need for both tests. “Race to avoid being beaten” may make sense for one actor. As a universal law, it yields an arms race; one cannot coherently will its stated aim unless one also wills institutions strong enough to prevent racing from becoming the dominant strategy.

The Kantian question must therefore be joined to the Hobbesian one: What institutions make the better maxim stable?⁶

IX. Governance as the Alteration of Can₂

The point of governance is not to deny constraint, but to change the structure that produces it. International coordination, liability, capability restrictions, limits on anthropomorphic design, and a distinction between narrow defensive systems and autonomous general systems are all attempts to alter the payoff matrix. They are not refusals of Can₂ reality. They are attempts to make a better Can₂ reality possible.
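What it means to alter the payoff matrix can be shown with a toy two-actor race. This is a sketch under stated assumptions: the base payoffs, the flat `penalty` standing in for liability or enforcement costs, and the names `payoff_with_penalty` and `equilibria` are all hypothetical and are not taken from Whetstone or from any actual policy proposal.

```python
from itertools import product

MOVES = ("restrain", "race")

# Hypothetical base payoffs with a prisoner's dilemma ordering.
# Key: (my_move, rival_move) -> my payoff
BASE_PAYOFF = {
    ("restrain", "restrain"): 3,
    ("restrain", "race"): 0,
    ("race", "restrain"): 4,
    ("race", "race"): 1,
}

def payoff_with_penalty(penalty):
    """Subtract an assumed governance cost from every payoff earned by racing."""
    return {
        (mine, rival): value - (penalty if mine == "race" else 0)
        for (mine, rival), value in BASE_PAYOFF.items()
    }

def equilibria(payoff):
    """Return every pair of moves in which each is a best response to the other."""
    def best(rival_move):
        return max(MOVES, key=lambda move: payoff[(move, rival_move)])
    return [
        (a, b)
        for a, b in product(MOVES, repeat=2)
        if a == best(b) and b == best(a)
    ]

if __name__ == "__main__":
    print("No governance:", equilibria(payoff_with_penalty(0)))
    print("Penalty of 2:", equilibria(payoff_with_penalty(2)))
```

With no penalty, the only equilibrium in this toy game is mutual racing. With an assumed penalty of 2 on racing, restraint becomes the best response to either move and mutual restraint becomes the only equilibrium. The actors and their preferences are unchanged; only the structure they act within has been changed.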

This is where Whetstone’s pragmatic idealism begins to look less like a theory of emergency choice than a theory of political character. It requires sophia (theoretical wisdom) to keep sight of the good: human flourishing, agency, knowledge, democratic self-government, and the treatment of persons as ends rather than obsolete inputs. It requires phronesis (practical wisdom) to perceive the actual constraints: geopolitical rivalry, adversarial misuse, corporate incentives, technical uncertainty, and the weakness of current governance. It also requires andreia (courage), because changing the incentive structure may mean slowing profitable deployments, accepting strategic inconvenience, restricting lucrative products, and refusing to build systems that users want and markets reward.

Pragmatic idealism, applied to A.I., should become more demanding than naïve optimism or fatalistic realism. Yes, the constraints are real. China is real. Corporate competition is real. Open-source proliferation is real. Bad actors are real. The benefits of A.I. are real. But incentives are human arrangements. Markets are human arrangements. Arms races are human arrangements. They can be altered.

X. Common Knowledge Before Catastrophe

Societies often regulate only after disaster has made denial impossible. But A.I. may not offer a single bright catastrophe. Its harms may arrive cumulatively: a degraded information environment, a hollowed labor market, children raised by synthetic companions, institutions dependent on opaque systems, biological and cyber capabilities diffused beyond control, political authority weakened by synthetic reality. By the time the public recognizes the shape of the disaster, the systems may be too embedded to remove.

Public argument matters because coordination requires common knowledge. It is not enough for many people privately to suspect that the race is dangerous. Each must know that others know it too. Otherwise, private alarm remains politically inert. The function of public argument is to create common knowledge before catastrophe. Whetstone’s pragmatic idealism teaches that moral maturity requires the ability to choose the lesser evil when the ideal is unavailable. But we must first ask who made the ideal unavailable, and who benefits from declaring it gone.

The answer will not be simple. Some constraints are real. Some forms of acceleration may be justified. Some defensive uses of A.I. should move quickly. Some restrictions may prove naïve, unenforceable, or counterproductive. A serious A.I. politics cannot consist in chanting “pause” at every frontier. Nor can it baptize the arms race as necessity.

The task is to alter the game before the game makes our choices for us.

Conclusion: Stop Manufacturing the Storm

In the ship-captain parable, the wise captain throws the cargo overboard because the storm has made the ideal impossible. A.I. asks us to imagine a more troubling case. Suppose the storm is not weather but the consequence of the fleet’s own maneuvers. Suppose each captain insists he must continue because the others will. Suppose the merchants are told their cargo must be sacrificed, not because nature has spoken, but because the captains cannot coordinate.

In that case, the first duty is not to praise the captain for choosing the lesser evil. It is to stop manufacturing the storm.

Bibliography

Aristotle. Nicomachean Ethics. Translated by Terence Irwin. 2nd ed. Indianapolis: Hackett Publishing, 1999.

Armstrong, Stuart, Nick Bostrom, and Carl Shulman. “Racing to the Precipice: A Model of Artificial Intelligence Development.” AI & Society 31, no. 2 (2016): 201–206.

Axelrod, Robert. The Evolution of Cooperation. New York: Basic Books, 1984.

Boyd, Robert, and Jeffrey P. Lorberbaum. “No Pure Strategy Is Evolutionarily Stable in the Repeated Prisoner’s Dilemma Game.” Nature 327 (1987): 58–59.

Foot, Philippa. “The Problem of Abortion and the Doctrine of the Double Effect.” Oxford Review 5 (1967): 5–15.

Hobbes, Thomas. Leviathan. Edited by Richard Tuck. Cambridge: Cambridge University Press, 1996.

Kant, Immanuel. Groundwork of the Metaphysics of Morals. Translated and edited by Mary Gregor. Cambridge: Cambridge University Press, 1998.

Machiavelli, Niccolò. The Prince. Translated by Harvey C. Mansfield. 2nd ed. Chicago: University of Chicago Press, 1998.

Maynard Smith, John, and George R. Price. “The Logic of Animal Conflict.” Nature 246 (1973): 15–18.

Nash, John. “Equilibrium Points in N-Person Games.” Proceedings of the National Academy of Sciences 36, no. 1 (1950): 48–49.

Thomson, Judith Jarvis. “Killing, Letting Die, and the Trolley Problem.” The Monist 59, no. 2 (1976): 204–217.

Thomson, Judith Jarvis. “The Trolley Problem.” Yale Law Journal 94, no. 6 (1985): 1395–1415.

Whetstone, Cole. “Pragmatic Idealism and the Logic of Lesser Evils (Part I): A Solution to the Trolley Problem.” New York Journal of Philosophy, April 15, 2026.

Whetstone, Cole. “Pragmatic Idealism and the Logic of Lesser Evils (Part II): Wish, Choice, and the Stability of Moral Principles.” New York Journal of Philosophy, April 22, 2026.

Haley Moller graduated from Yale College in 2023 with a degree in English. She now lives and works in San Francisco, where she writes about technology and the ethics of artificial intelligence.

Notes

1. The trolley case originates with Philippa Foot’s discussion of abortion and the doctrine of double effect, where she asks whether it is permissible to divert a runaway trolley so that it kills one person rather than five. Judith Jarvis Thomson later developed the case and contrasted it with the “transplant” or “surgeon” case, in which a doctor kills one healthy patient to distribute his organs to five patients who would otherwise die. See Philippa Foot, “The Problem of Abortion and the Doctrine of the Double Effect,” Oxford Review 5 (1967): 5–15; Judith Jarvis Thomson, “Killing, Letting Die, and the Trolley Problem,” The Monist 59, no. 2 (1976): 204–217; and Judith Jarvis Thomson, “The Trolley Problem,” Yale Law Journal 94, no. 6 (1985): 1395–1415.

2. This is the basic insight of noncooperative game theory: The moral or strategic significance of an action depends not only on what one actor intends, but on what pattern of behavior the strategy generates when adopted by many agents under conditions of mutual expectation. See John Nash, “Equilibrium Points in N-Person Games,” Proceedings of the National Academy of Sciences 36, no. 1 (1950): 48–49.

3. Whetstone’s pacifism example draws on the prisoner’s dilemma tradition, especially Robert Axelrod’s account of how unconditional cooperation can be exploited by defectors and how reciprocal strategies can stabilize cooperation under non-ideal conditions. See Robert Axelrod, The Evolution of Cooperation (New York: Basic Books, 1984). For the related concept of evolutionary stability, see John Maynard Smith and George R. Price, “The Logic of Animal Conflict,” Nature 246 (1973): 15–18. Whetstone applies this logic to pacifism as a case of “toxic idealism”: an ideal that remains possible in principle but fails under adversarial conditions.

4. A.I. acceleration has been modeled in similar terms: When several actors race to develop a powerful technology, each may have an incentive to cut corners on safety in order to finish first, even if all would prefer a safer collective outcome. See Stuart Armstrong, Nick Bostrom, and Carl Shulman, “Racing to the Precipice: A Model of Artificial Intelligence Development,” AI & Society 31, no. 2 (2016): 201–206.

5. Kant’s first formulation of the categorical imperative requires one to “act only according to that maxim whereby you can at the same time will that it should become a universal law.” Whetstone’s contribution is to argue that universalizability is insufficient unless the maxim is also feasible under actual conditions: not merely Can₁-consistent but Can₂-viable. The A.I. arms-race maxim exposes this gap. “Race so that responsible actors win” may be locally intelligible, but when universalized across firms and states, it generates the very competitive structure that makes responsible development harder. See Immanuel Kant, Groundwork of the Metaphysics of Morals, trans. Mary Gregor (Cambridge: Cambridge University Press, 1998), 4:421; Cole Whetstone, “Pragmatic Idealism and the Logic of Lesser Evils (Part II): Wish, Choice, and the Stability of Moral Principles,” New York Journal of Philosophy, April 22, 2026.

6. See Immanuel Kant, Groundwork of the Metaphysics of Morals, trans. Mary Gregor (Cambridge: Cambridge University Press, 1998), 4:421; Thomas Hobbes, Leviathan, ed. Richard Tuck (Cambridge: Cambridge University Press, 1996), chs. 13–17.